Building Production-Grade RAG in 2025: From Demo to Enterprise Reality

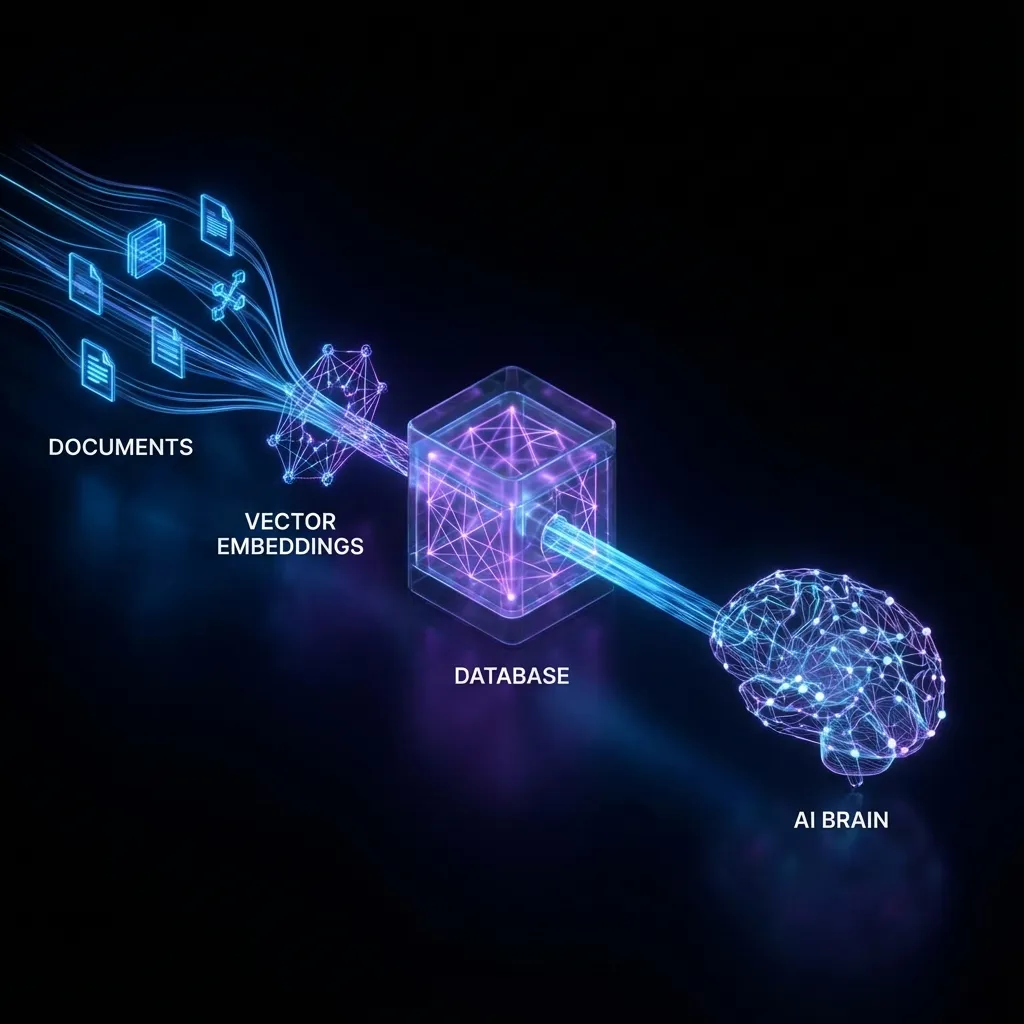

Over the past year, Retrieval-Augmented Generation (RAG) has rapidly evolved from a novel academic concept into the standard architecture for enterprise AI applications. For today’s developers, building a basic RAG demo with LangChain or LlamaIndex is trivial: drop a PDF, chunk it, index it in a vector store, and have ChatGPT answer questions.

However, the nightmare begins when you try to push that demo into a production environment—especially when facing complex enterprise documents with inconsistent formatting and dense technical content. High hallucination rates, “relevant but useless” retrieval results, and unacceptable Time to First Token (TTFT) are the hallmarks of the “RAG Gap.”

Crossing this gap requires obsessive engineering. This article summarizes my hands-on experience building production-grade RAG systems using the 2025 tech stack. It’s not just about choosing the right LLM; it’s about building a robust, high-quality data pipeline.

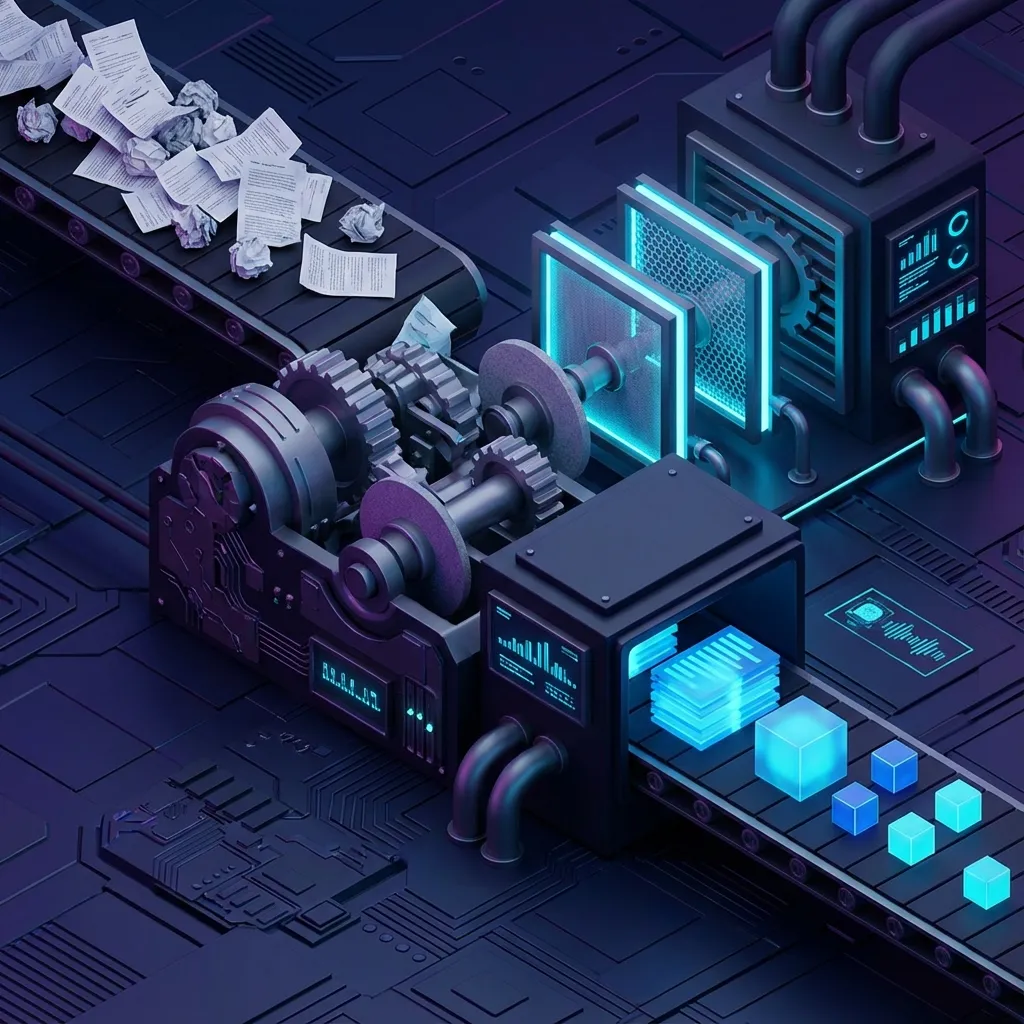

1. Garbage In, Garbage Out: Data Cleaning is the Soul of RAG

In a RAG system, the quality of retrieval sets the ceiling for generation. Many developers spend weeks fine-tuning embedding models but ignore the most fundamental step: data quality.

Production data is rarely clean Markdown. You’re dealing with scanned PDFs, chaotic PPTs, Word docs with nested headers/footers, and complex Excel spreadsheets.

The PDF Parsing Dilemma

Standard Python libraries like PyPDF2 extract linear text streams. They ruthlessly break tables, confuse multi-column layouts, and treat page numbers as body text.

2025 Best Practices:

- Vision-Language Model Parsing: For complex scans or diagrams, traditional OCR is insufficient. The current trend is to use GPT-4o or specialized layout-aware models (e.g., LlamaParse, Unstructured.io) to reconstruct page structure.

- Table Reconstruction: Tables are the Achilles’ heel of RAG. Without specialized handling, flattened table data loses its context. You must convert tables to Markdown or provide natural language summaries before embedding.

The Gold Mine of Metadata

Don’t just store text. Before chunking, extract as much metadata as possible:

- Hierarchy: Which H1/H2 header does this chunk belong to?

- Recency: Publication or last updated date.

- Business Context: Department, security clearance, or related product lines.

This metadata is primarily used for Pre-filtering. Before performing vector search, narrow the scope with a SQL-like filter (e.g., WHERE department = 'HR' AND year > 2023). This reduces noise, improves accuracy, and handles access control—ensuring sensitive data doesn’t leak to unauthorized users.

2. Intelligent Chunking: Moving Beyond Characters

RecursiveCharacterTextSplitter is where most people start, but mechanical cutting (e.g., every 500 characters) is disastrous in production.

Imagine a critical business rule: “If the user is overdue by 30 days, then… (cut) …terminate service and send a legal notice.” If the cut happens right after “then,” the retrieval might only recurse the second half, leading the LLM to hallucinate that any overdue user should receive a legal notice.

Semantic Chunking

In 2025, the industry has pivoted toward Semantic Chunking. Instead of fixed lengths, we use embedding models to calculate the similarity between adjacent sentences.

- Iterate through every sentence in the document.

- Calculate the cosine similarity between sentence N and N+1.

- Where similarity drops significantly (indicating a topic shift), a break is created.

This ensures every chunk is a semantically complete concept, vastly improving retrieval precision.

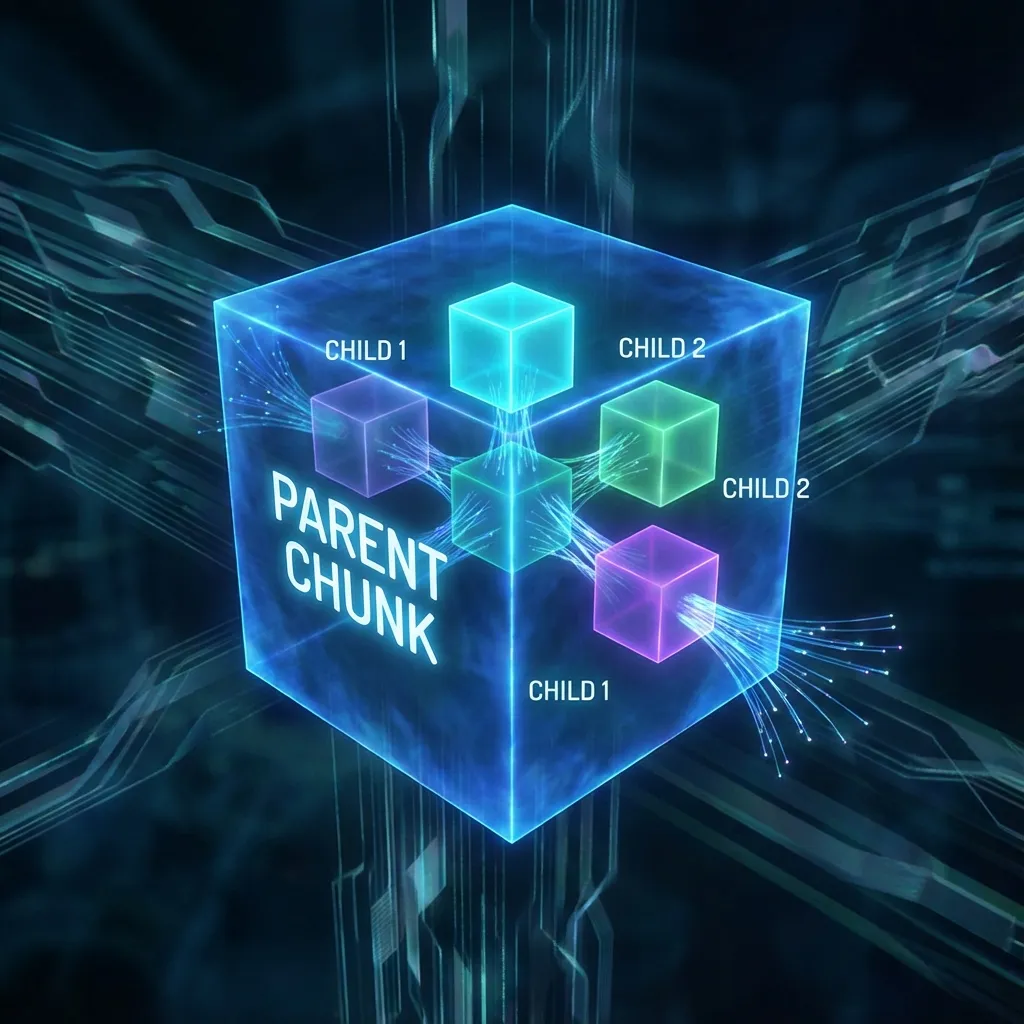

Small-to-Big (Parent-Child Indexing)

This is a highly effective, yet often overlooked strategy that solves the tension between “retrieval granularity” and “generation context.”

- Retrieval Granularity: For accurate matching, chunks should be small and focused.

- Generation Context: For high-quality answers, the LLM needs the full surrounding context.

The Solution:

- Divide documents into large Parent Chunks (e.g., 2000 tokens).

- Split each parent into smaller Child Chunks (e.g., 200 tokens).

- Index only the child chunks.

- When a child chunk is retrieved, serve the entire parent chunk to the LLM.

This maintains sharp retrieval focus while providing the rich context needed for a superior answer.

3. Hybrid Search: Vectors are Not a Silver Bullet

Dense retrieval (vectors) is great at matching abstract semantics—e.g., searching for “connection issues” and finding “check router settings.” However, for proper nouns, exact numbers, or error codes, it often fails compared to traditional keyword search.

Try searching for a specific error code like Error 0x80042109 in a pure vector store. You’ll likely get a bunch of documents about “unknown errors” rather than the specific one containing that exact string.

Production-grade systems must use Hybrid Search:

- Dense Retrieval: Using embedding models (like OpenAI text-embedding-3-small or BGE-M3) for semantic similarity.

- Sparse Retrieval: Using the BM25 algorithm for exact keyword matching.

- Reciprocal Rank Fusion (RRF): Merging the results from both paths.

RRF is a simple yet powerful algorithm for rank merging: $$ Score(d) = \sum \frac{1}{K + rank(d)} $$ It focuses on the relative rank rather than absolute similarity scores, perfectly normalizing different scoring systems.

4. Re-ranking: Your Final Line of Defense

To ensure high recall, we typically retrieve the Top 20 or even Top 50 chunks. But feeding 50 chunks to an LLM is a bad idea:

- Token Cost: Costs skyrocket and latency increases.

- Lost in the Middle: LLMs tend to ignore information in the middle of a large context window.

Introducing a Cross-Encoder Re-ranker is the most cost-effective way to boost performance. Initial retrieval relies on Bi-Encoders for speed. A Re-ranker (like Cohere Rerank or BGE-Reranker) performs a deep semantic interaction between the Query and each Document to give a highly precise relevance score.

The Pipeline:

- Hybrid search retrieves 50 candidate documents.

- The Re-ranker scores all 50.

- Truncate the list based on a threshold (e.g., score > 0.7) and serve only the Top 5 to the LLM.

While it adds a few hundred milliseconds of latency, the improvement in RAG quality is existential.

5. Continuous Evaluation: Stop Using “Vibes”

If you’re still evaluating your RAG system based on “asking a few questions to see if it feels right,” you’re not engineering; you’re guessing. Changing a chunking parameter might fix one issue but break ten others.

You need an automated evaluation pipeline (RAGOps):

- Golden Dataset: A representative set of 50-100 QA pairs (Query + Ground Truth Answer + Context).

- Auto-Scoring: Use frameworks like Ragas or TruLens that use GPT-4 as a “judge” to calculate core metrics:

- Context Precision: Do the retrieved chunks actually contain the answer?

- Faithfulness: Is the answer derived from the context, or did the LLM hallucinate?

- Answer Relevance: Does the answer directly address the user’s query?

Every code change or parameter tweak should run through this automated regression test before deployment.

Summary

There is no silver bullet for building production-grade RAG. It is a war of engineering details—from the “dirty work” of data cleaning to the algorithmic nuances of hybrid search and the rigors of RAGOps.

Don’t blindly chase bigger models. Fix your data pipeline first. In 2025, Data Engineering is the new Prompt Engineering.