Deep Dive into RAG: Hybrid Search and Re-ranking

In our previous article, we explored the macro-architecture of production-grade RAG. Today, we’re diving into the deep end, focusing on the most critical and challenging component of any RAG system: Retrieval.

Many developers face this common frustration when deploying RAG: “I’ve chunked my documents perfectly and stored them in ChromaDB, but when a user asks for ‘Product Model X-200,’ the embedding search returns an irrelevant paragraph about ‘ISO 9001 certification’ instead of the actual spec sheet.”

The root cause is simple: Embedding models (Bi-Encoder models) excel at capturing semantic similarity but often struggle with “Exact Matching” (e.g., part numbers, names, or code IDs).

To solve this, we need to deploy two heavy-duty techniques: Hybrid Search and Re-ranking. This article cuts through the hype to provide the code and algorithmic principles you need to build a high-precision retrieval pipeline.

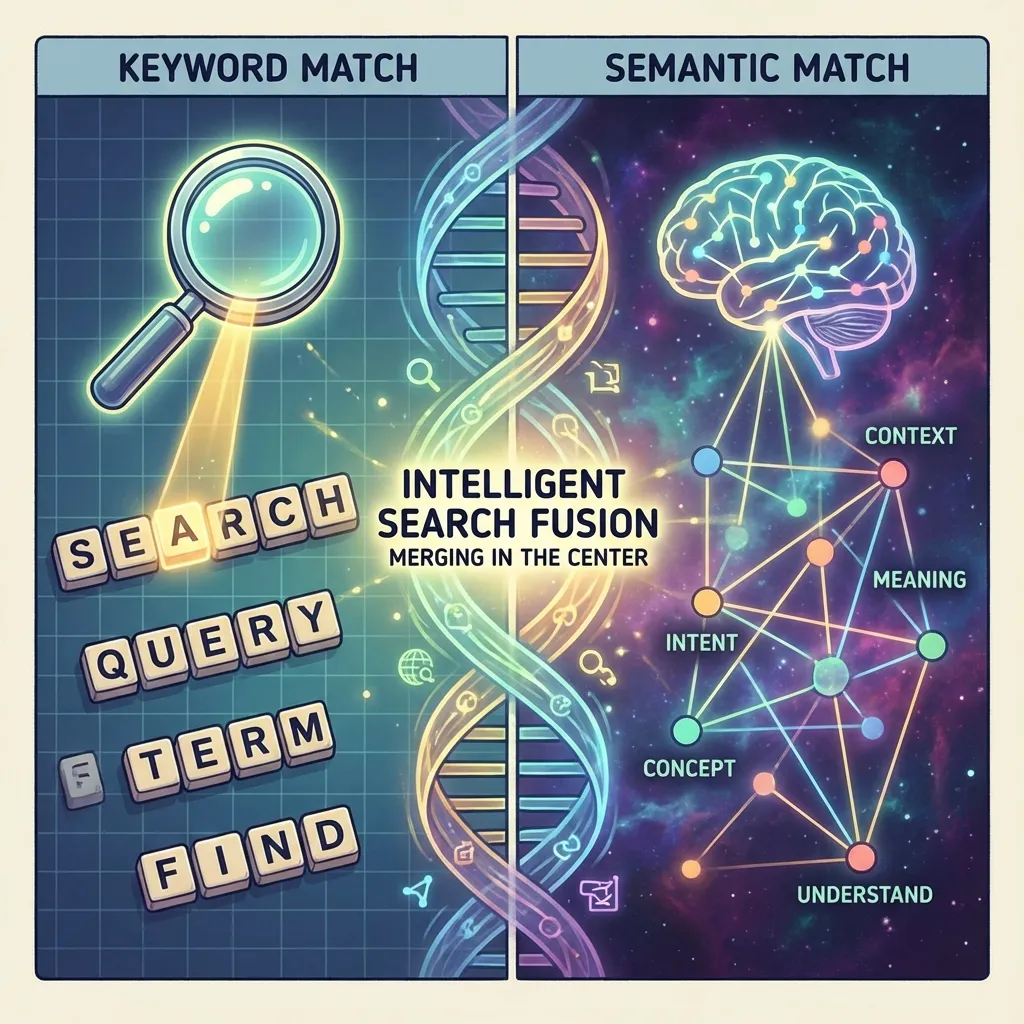

1. Hybrid Search: The Power of Combination

The core philosophy of Hybrid Search is “don’t put all your eggs in one basket.” It combines two fundamentally different retrieval technologies:

- Dense Retrieval: Calculating cosine similarity of embedding vectors. Excels at understanding “intent.”

- Sparse Retrieval: Inverted indices based on the BM25 algorithm. Excels at “exact matching.”

Why combine them?

Consider a user query: “How to fix Error 502 Bad Gateway?”

Vector Search Perspective: It sees “fix,” “error,” and “gateway.” It might return a general article on “server maintenance,” which is semantically related but might not mention the specific 502 code at all. This is “relevant but useless.”

BM25 Perspective: It’s a literal matching machine. it fixates on the “502” token. It is highly likely to locate the specific troubleshooting manual that contains the “502” string.

Hybrid Search Perspective: It understands that you want to fix a fault (Vector’s job) and locks onto the specific “502” feature (BM25’s job). Only by combining both can you guarantee high Recall.

Practical Implementation: RRF Fusion with LangChain

How do you merge results from two different scales (vectors are usually 0.7-0.9 cosine similarity, while BM25 scores are absolute values like 10-20)? The most robust algorithm is RRF (Reciprocal Rank Fusion). It ignores the raw scores and focuses entirely on the ranking.

The formula: $$ RRFscore(d) = \sum_{r \in R} \frac{1}{k + r(d)} $$ Where $k$ is a constant (usually 60) and $r(d)$ is the document’s rank in that specific retrieval path.

Here is the Python implementation logic:

from langchain.retrievers import EnsembleRetriever

from langchain_community.retrievers import BM25Retriever

from langchain_community.vectorstores import FAISS

from langchain_openai import OpenAIEmbeddings

# 1. Initialize the two Retrievers

# Assume 'docs' is your list of split Documents

bm25_retriever = BM25Retriever.from_documents(docs)

bm25_retriever.k = 50 # Recall top 50 via sparse search

embedding = OpenAIEmbeddings()

vectorstore = FAISS.from_documents(docs, embedding)

vector_retriever = vectorstore.as_retriever(search_kwargs={"k": 50}) # Recall top 50 via vector search

# 2. Initialize the Hybrid Retriever (EnsembleRetriever uses RRF by default)

ensemble_retriever = EnsembleRetriever(

retrievers=[bm25_retriever, vector_retriever],

weights=[0.5, 0.5] # Weights can be tuned; 50/50 is a good start

)

# 3. Execute Retrieval

docs = ensemble_retriever.invoke("How to fix Error 502?")

# Returns a deduplicated and re-ranked list of top resultsIn production, if you use platforms like ElasticSearch or Milvus, they have Hybrid Search built-in, following these same principles.

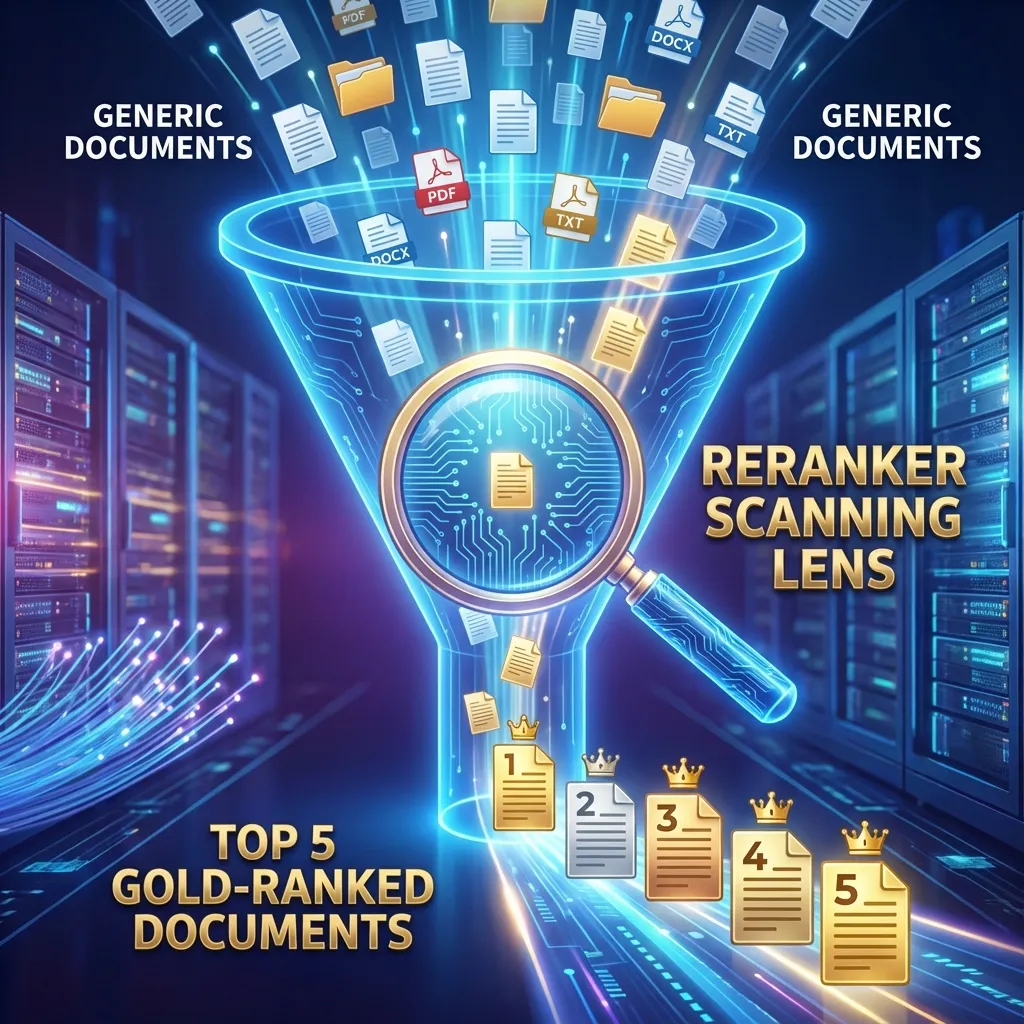

2. Re-ranking: Surgical Precision

After Hybrid Search, we usually have a candidate pool of Top 50 or even Top 100 documents. Crucial note: Never throw all 50 documents directly into the LLM.

Here’s why:

- Noise Interference: Out of 50, only 3 might be truly relevant. The other 47 are distractions that significantly degrade the LLM’s reasoning (the Distraction Issue).

- Sky-high Costs: Tokens are expensive. Feeding 10k extra tokens per request will blow up your API bill.

- Increased Latency: Longer contexts mean slower Time-To-First-Token (TTFT).

This is where the Re-ranker (Cross-Encoder model) comes in.

Bi-Encoder vs. Cross-Encoder

- Vector search uses a Bi-Encoder: Queries and Documents are encoded independently into vectors. This is blazingly fast (milliseconds) and perfect for searching through millions of records (Coarse-stage retrieval).

- Re-ranking uses a Cross-Encoder: It joins the Query and the Document together (e.g.,

[CLS] Query [SEP] Document [SEP]) and feeds them into a BERT-like model for deep Full-Attention interaction. It can judge with extreme precision whether “this specific sentence answers this specific question.” It’s slower (hundreds of milliseconds) but vastly more accurate (Fine-stage re-ranking).

Model Selection and Implementation

Some of the best performing Re-rankers today include:

- BGE-Reranker-v2-m3: The powerhouse of the open-source community with excellent multilingual support.

- Cohere Rerank API: A SOTA commercial solution. No need to manage GPU resources—perfect for startups.

- Jina Reranker: Another strong contender with support for extremely long contexts.

Using HuggingFace + BGE-Reranker:

from sentence_transformers import CrossEncoder

# Load model (GPU deployment recommended)

model = CrossEncoder('BAAI/bge-reranker-v2-m3', max_length=512)

def rerank_documents(query, retrieved_docs, top_k=5):

# Construct input pairs: [[query, doc1], [query, doc2], ...]

pairs = [[query, doc.page_content] for doc in retrieved_docs]

# Predict scores

scores = model.predict(pairs)

# Pair docs with scores

doc_score_pairs = list(zip(retrieved_docs, scores))

# Sort docs descending by score

doc_score_pairs.sort(key=lambda x: x[1], reverse=True)

# Set an empirical threshold for relevance (optional)

filtered_results = [

doc for doc, score in doc_score_pairs

if score > 0.5

]

return filtered_results[:top_k]

# Chain this after the ensemble_retriever

final_docs = rerank_documents("How to fix Error 502?", docs)3. The Complete Production Pipeline

A mature RAG retrieval pipeline should look like this:

- Query Rewrite: If a user asks “How much is it?”, use conversation history to rewrite the query to “What is the price of the iPhone 15 Pro Max?”

- Hybrid Retrieval (Coarse-stage):

- Parallel Vector Search (Top 50).

- Parallel BM25 Search (Top 50).

- RRF Fusion to get a unique Top 60.

- Re-ranking (Fine-stage):

- Use a Cross-Encoder to score the Top 60.

- If the top-scoring doc is still below a threshold (e.g., < -5), trigger a “Refusal” mechanism—tell the user “I couldn’t find relevant information in the knowledge base” rather than hallucinating.

- Context Construction: Slice the Top 5 and feed them into the Prompt.

4. Key Takeaways and Optimization Tips

- Is Re-ranking too slow? Cross-Encoders are heavy. If you’re latency-sensitive, consider ColBERT architectures (like Jina-ColBERT), which preserve the precision of Cross-Encoders with speeds closer to Bi-Encoders.

- Multilingual Support: If your docs are in English but your users ask in Spanish, BM25 will fail. You’ll need a “Query Translation” step before retrieval.

- Don’t Hardcode Thresholds: Re-ranker logits might not be normalized. Run a test dataset, observe the score distributions between positive and negative samples, and set a threshold based on statistical evidence.

Summary

If your RAG system is consistently providing off-target answers, don’t just throw a larger LLM at it. According to the law of diminishing returns, you should check your retrieval pipeline first.

Retrieval is like prepping ingredients for a chef. If you can use Hybrid Search and Re-ranking to throw out the “rotten leaves” (irrelevant docs) and provide only the “premium wagyu” (precise context), even a standard chef (a smaller LLM) can cook up a masterpiece.