Full-Stack Architecture 2025: Why We’re Returning to Pragmatism

As a full-stack developer, the most exhausting part of the last few years hasn’t been writing the code; it’s been choosing the technology.

On one hand, you have the Kubernetes microservices ecosystem, where every service requires a Dockerfile, Helm Chart, and Service Mesh configuration. On the other, the AWS/Vercel Serverless stack offers speed but brings challenges like cold starts, connection pool exhaustion, and vendor lock-in.

By 2025, the tide has turned. With the maturity of tools like Drizzle ORM, htmx, and Next.js Server Actions, the industry is shifting from “blind decomposition” back to “pragmatism.” We are no longer designing architectures to “be like Google”; we are designing to deliver like a high-velocity independent builder.

1. Microservices are Dead? Long Live the Modular Monolith

Looking back at the last decade, microservices were over-mythologized. I’ve seen 5-person teams aggressively split their systems into 20+ microservices, only to end up in:

- Developer Hell: Changing one field requires changes across three repositories.

- Debugging Hell: Spending hours in distributed tracing just to find a simple bug.

- Transaction Hell: Simple ACID transactions replaced by fragile eventual-consistency patterns such as TCC (Try-Confirm-Cancel) or Sagas.

The primary architecture of 2025 is the Modular Monolith.

What is a Modular Monolith?

You develop in a single repository and deploy it as a single unit (e.g., one Docker container or one Serverless Function). However, your code has strict logical boundaries.

In frameworks like NestJS or Spring Boot, this means you have modules/auth, modules/payment, and modules/order.

The golden rule: No circular dependencies, and no reaching into another module’s internals. The Order module cannot directly import the User module’s internal service; it must communicate through the public interface that module exposes.
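Here is a minimal sketch of that rule in TypeScript. The names `UserModuleApi`, `UserService`, and `OrderService` are hypothetical and not taken from NestJS or Spring Boot, and everything is shown in one file for brevity; in a real codebase each part would sit in its own module directory. The point is only that the Order code depends on the interface the User module publishes, never on its internals.

```typescript
// --- modules/user/public-api.ts (hypothetical): the only thing other modules may import ---
interface UserSummary {
  id: number;
  displayName: string;
}

interface UserModuleApi {
  getUserSummary(id: number): UserSummary | undefined;
}

// --- modules/user/user.service.ts (hypothetical): internal to the User module ---
class UserService implements UserModuleApi {
  // Internal storage detail; other modules never see this shape.
  private readonly rows: { id: number; first: string; last: string }[] = [
    { id: 1, first: "Ada", last: "Lovelace" },
  ];

  getUserSummary(id: number): UserSummary | undefined {
    const row = this.rows.find((r) => r.id === id);
    return row ? { id: row.id, displayName: `${row.first} ${row.last}` } : undefined;
  }
}

// --- modules/order/order.service.ts (hypothetical): depends on the interface only ---
class OrderService {
  private readonly users: UserModuleApi;

  constructor(users: UserModuleApi) {
    this.users = users;
  }

  describeOrder(orderId: number, userId: number): string {
    const user = this.users.getUserSummary(userId);
    if (!user) {
      throw new Error(`Unknown user ${userId}`);
    }
    return `Order ${orderId} for ${user.displayName}`;
  }
}

// Wiring happens once at the application root; this is an in-process call, not a network hop.
const orderService = new OrderService(new UserService());
console.log(orderService.describeOrder(42, 1)); // Order 42 for Ada Lovelace
```

If the User module ever has to become a separate service, only the implementation behind `UserModuleApi` needs to change; `OrderService` stays as it is.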

This gives you the best of both worlds:

- Seamless Developer Experience: IDE “Go to Definition” works, refactoring is trivial, and there is zero network latency between components.

- Simple Deployment: A single CI/CD pipeline and one environment to manage.

- Scalability: If your Payment module ever actually hits a traffic peak requiring independent scaling, its clear boundaries make it easy to rip out and spin up as a microservice.

Shopify, GitHub, and Stack Overflow ran on massive monoliths for years. If it worked for them, it will almost certainly work for you.

2. Serverless Databases & Scalability

Previously, the biggest pain point of Serverless was Connection Pool Exhaustion.

When AWS Lambda scales to a thousand instances simultaneously, each one attempts to connect to a traditional PostgreSQL instance. Postgres connections are heavy, process-level resources. The default max_connections setting is 100, and even a tuned instance might only handle a few hundred. The result? Total database collapse.

To solve this, we were forced into complex setups with PgBouncer or expensive database proxies.
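The arithmetic behind that collapse is easy to sketch. The function below is a deliberately rough model, not a measurement: `rejectedConnections` is a hypothetical helper, and it assumes every concurrent function instance opens its own connections with nothing pooling them.

```typescript
// Rough model: every concurrent function instance opens its own connections,
// and the database refuses anything beyond its connection limit.
function rejectedConnections(
  instances: number,
  connectionsPerInstance: number,
  maxConnections: number,
): number {
  const requested = instances * connectionsPerInstance;
  return Math.max(0, requested - maxConnections);
}

// 1,000 Lambda instances, one connection each, against Postgres's default max_connections of 100.
console.log(rejectedConnections(1000, 1, 100)); // 900

// A traditional server with a fixed pool of 50 connections stays under the limit.
console.log(rejectedConnections(1, 50, 100)); // 0
```

A pooler such as PgBouncer changes the first case by multiplexing many client connections onto a small number of real Postgres connections.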

Now, Neon, PlanetScale, and Turso (SQLite) have fundamentally changed the landscape.

HTTP is the New TCP

These next-gen databases provide HTTP-based APIs or intelligent WebSocket proxies.

- Neon: Built on Postgres with a decoupled compute/storage architecture. It scales to zero when idle, and its built-in connection pooling accepts far more client connections than a stock Postgres instance.

- Turso: Built on libSQL, a fork of SQLite. It treats your database as a file that is replicated globally and queried over HTTP. This allows you to push data to the Edge, right next to your users.

Accessing a database in an Edge Function is now as simple as:

// Drizzle ORM + Turso
import { drizzle } from 'drizzle-orm/libsql';
import { createClient } from '@libsql/client';
import { usersTable } from './schema'; // assumed path to your Drizzle table definitions

// DB_URL and DB_TOKEN must be set in the environment
const client = createClient({ url: process.env.DB_URL!, authToken: process.env.DB_TOKEN });
const db = drizzle(client);

// This query is sent as an HTTP request, not over a long-lived, per-client database connection
const users = await db.select().from(usersTable).all();

3. The Edge Computing Cool-off

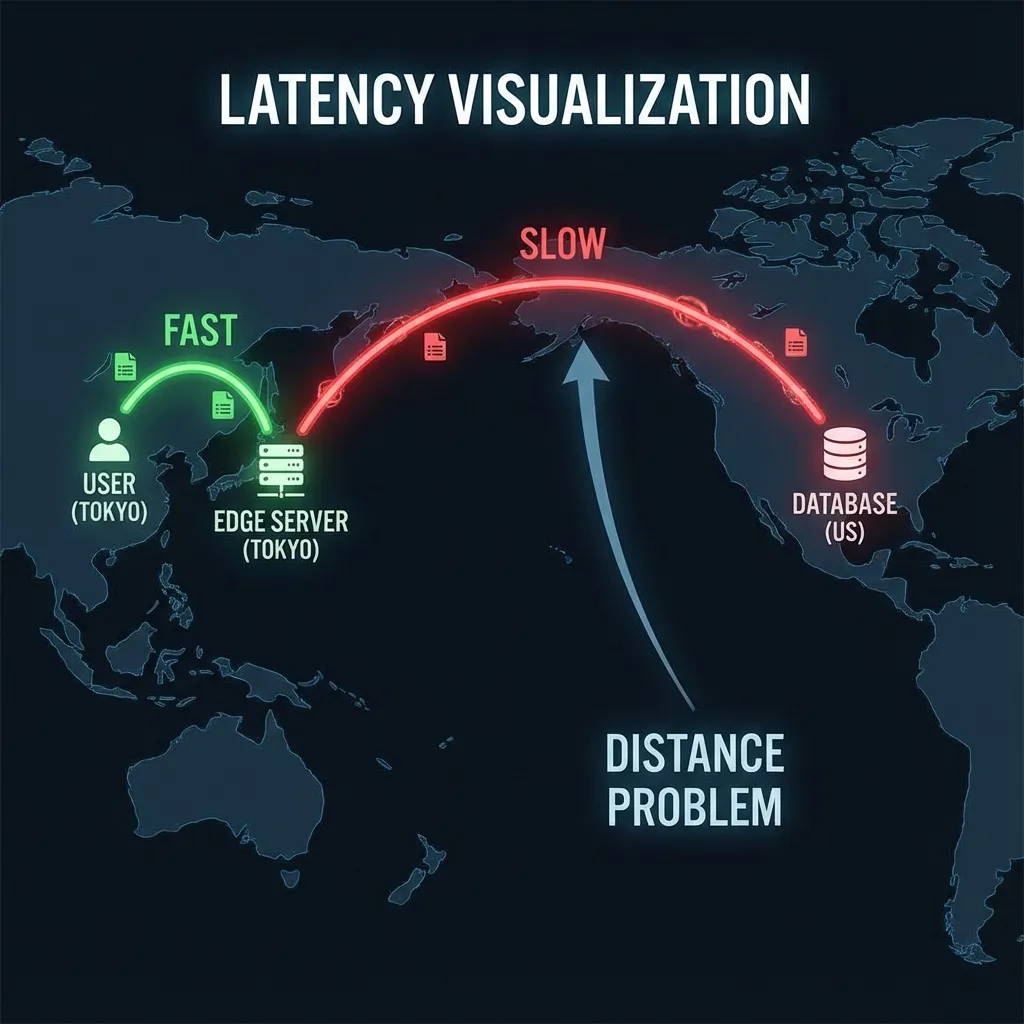

Two years ago, “Edge First” was the mantra—moving every bit of logic to Cloudflare Workers or Vercel Edge. In 2025, we’ve confronted a hard physical reality: The speed of light is constant.

If your database is in us-east-1 (Virginia) and your Edge Function is in Tokyo, the picture in round numbers looks like this:

- User (Tokyo) -> Edge (Tokyo): Extremely fast (10ms).

- Edge (Tokyo) -> DB (Virginia): Extremely slow (200ms).

- DB -> Edge: Slow (200ms).

Total time: 400ms+ for a single query, and every additional sequential query adds another 400ms round trip. This is the Waterfall Problem.

Compare this to a traditional single-region setup (e.g., Virginia):

- User (Tokyo) -> Backend (Virginia): Slow (200ms).

- Backend (Virginia) -> DB (Virginia): Extremely fast (1ms, internal network).

- Backend -> User: Slow (200ms).

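The total time is also around 400ms, but it is often faster in practice: the backend reuses its database connections, and each additional query costs about 1ms instead of another cross-region round trip.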

Unless you have achieved Global Database Replication (which is complex and introduces consistency delays), Compute near Data remains the best-performing default.
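To make the comparison concrete, here is a small back-of-the-envelope model using the latency figures above. It is a sketch under stated assumptions: the function names are hypothetical, the response back to the user is counted as well, and queries are assumed to run strictly one after another.

```typescript
// One-way latencies in milliseconds, taken from the figures above.
const USER_TO_EDGE = 10;  // Tokyo user <-> Tokyo edge, each direction
const CROSS_REGION = 200; // Tokyo <-> Virginia, each direction
const SAME_REGION = 1;    // backend <-> DB inside Virginia, each direction

// Edge function in Tokyo, database in Virginia:
// every sequential query pays the cross-region trip in both directions.
function edgeLatency(sequentialQueries: number): number {
  return 2 * USER_TO_EDGE + sequentialQueries * 2 * CROSS_REGION;
}

// Backend in Virginia next to the database:
// the cross-region trip is paid once, and queries stay on the internal network.
function regionalLatency(sequentialQueries: number): number {
  return 2 * CROSS_REGION + sequentialQueries * 2 * SAME_REGION;
}

for (const n of [1, 3, 5]) {
  console.log(`${n} queries: edge ${edgeLatency(n)} ms, regional ${regionalLatency(n)} ms`);
}
```

With these numbers a single query costs 420 ms at the Edge versus 402 ms in the region, which is roughly a tie. Three sequential queries cost 1220 ms versus 406 ms, and that gap is the waterfall.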

Edge computing is now returning to its strengths:

- Auth Verification: JWT validation and request filtering.

- Simple Routing: Redirecting users to the nearest primary region.

- Static Assets: HTML/CSS/JS/Images.

Core business logic (CRUD) is returning to centralized Regions.
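As a sketch of the kind of logic that still fits well at the Edge, the function below does cheap request filtering and region routing without touching a database. Everything in it is an assumption for illustration: the `routeAtEdge` name, the continent codes, and the example.com origins are hypothetical, and it only checks that a bearer token is present rather than verifying a JWT signature.

```typescript
// Hypothetical mapping from a coarse geo hint to the region that owns the data.
const REGION_ORIGINS: Record<string, string> = {
  NA: "https://us-east.example.com",
  EU: "https://eu-west.example.com",
  AS: "https://ap-northeast.example.com",
};
const DEFAULT_ORIGIN = "https://us-east.example.com";

interface EdgeDecision {
  status: 307 | 401;
  location?: string;
}

// Runs at the edge: reject requests with no bearer token, redirect the rest
// to the region closest to the caller. No database access is needed.
// Real JWT signature verification is intentionally left out of this sketch.
function routeAtEdge(continent: string, path: string, authorization?: string): EdgeDecision {
  if (!authorization || !authorization.startsWith("Bearer ")) {
    return { status: 401 };
  }
  const origin = REGION_ORIGINS[continent] ?? DEFAULT_ORIGIN;
  return { status: 307, location: origin + path };
}

console.log(routeAtEdge("AS", "/orders", "Bearer some-token"));
console.log(routeAtEdge("EU", "/orders"));
```

The decision depends only on the request itself, which is why it can run anywhere in the world without paying a cross-region round trip.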

4. The Rise of Full-stack Frameworks: The Death of BFF

We used to talk about the BFF (Backend for Frontend) pattern: creating a GraphQL or specialized API layer to serve different clients (iOS, Android, Web).

Modern full-stack frameworks like Next.js, Remix, and Nuxt have effectively internalized the BFF.

When you write a Server Action in Next.js, you are writing the BFF. You write backend logic right next to your component, import your ORM, and pass sanitized data directly. No RESTful URLs, no Swagger docs, no JSON schemas. The tight coupling (co-location) of frontend components and data retrieval can speed up development considerably.
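A rough sketch of what that looks like follows. The `"use server"` directive is the Next.js marker for a Server Action; everything else is an assumption for illustration. `renameUser` is a hypothetical action, and the in-memory `usersById` object stands in for an ORM call so the sketch runs without a database.

```typescript
// Stand-in for an ORM-backed table so the sketch runs without a database (assumption).
interface UserRow {
  id: number;
  name: string;
  passwordHash: string;
}

const usersById: Record<number, UserRow> = {
  1: { id: 1, name: "Ada", passwordHash: "not-a-real-hash" },
};

// In a Next.js app this function would live next to the component that calls it.
async function renameUser(
  input: { id: number; name: string },
): Promise<{ id: number; name: string }> {
  "use server"; // Next.js directive marking this function as a Server Action

  // Validate on the server; never trust what the client sends.
  const name = input.name.trim();
  if (name.length === 0) {
    throw new Error("Name must not be empty");
  }

  const user = usersById[input.id];
  if (!user) {
    throw new Error(`User ${input.id} not found`);
  }
  user.name = name;

  // Return only the fields the UI needs; passwordHash never leaves the server.
  return { id: user.id, name: user.name };
}

renameUser({ id: 1, name: "  Ada L.  " }).then((user) => console.log(user));
```

The return value is the "sanitized data" from the paragraph above: the component receives a plain object shaped for the UI, with no hand-written endpoint in between.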

“Backend development” today often isn’t about maintaining a standalone Golang/Java service; it’s about writing service-layer logic within the context of a full-stack framework.

Summary

The philosophy of backend architecture in 2025 is: Keep it Simple, Make it Scale.

- Default to Modular Monolith: Avoid premature microservices.

- Embrace Serverless DB: Offload the “dirty work” of DB management to specialized providers.

- Compute Near Data: Don’t blindly use the Edge unless you have the data locality to support it.

- Full-stack Frameworks First: Let your framework handle the routing and data-fetching layer.